Integrated Development of Computer Graphics and Vision

Introduction

In today’s digital era, the integration of computer graphics and computer vision is a key driver of scientific and technological progress and is attracting growing attention. From virtual reality to augmented reality, and from film special effects to medical image processing, it has profoundly changed the way people live and work. The two fields are closely related, and combining them is producing new technologies. This forum will explore technologies and applications at their intersection, including how to combine graphics and vision techniques to deeply integrate the virtual and real worlds. From smart glasses to interactive projection, visual fusion technology is leading the future of human-computer interaction. The fusion of graphics and vision also has broad application prospects in fields such as medical imaging and industrial design: the forum will discuss how 3D visualization can assist doctors in surgical planning and simulation, and how virtual prototyping and digital twin technology can accelerate product innovation and development in industrial design. Experts from academia will discuss cutting-edge developments in the integration of computer graphics and computer vision, as well as specific application practices.

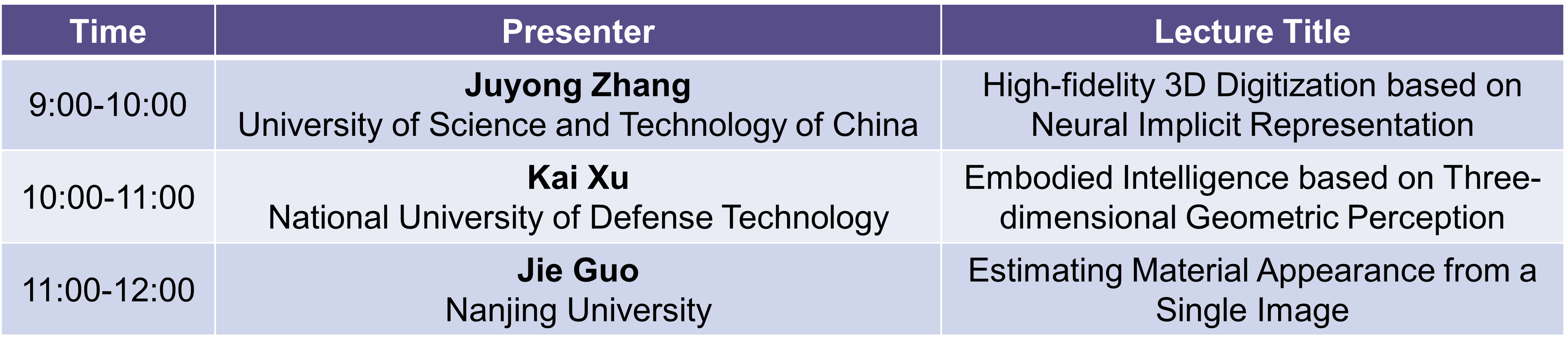

Schedule

Sept. 22nd 9:00-12:00

Organizers

Weiwei Xu

Zhejiang University

Biography: Weiwei Xu is currently a tenured professor at the State Key Laboratory of CAD&CG, School of Computer Science and Technology, Zhejiang University, and a Changjiang Scholar of the Ministry of Education. He was previously a postdoctoral fellow at Ritsumeikan University in Japan, a researcher in the Network Graphics Group at Microsoft Research Asia, and a Zhejiang Qianjiang Scholar distinguished professor at Hangzhou Normal University. His main research direction is computer graphics, covering 3D reconstruction, deep learning, physical simulation, and 3D printing. He has published more than 80 papers in high-level academic conferences and journals at home and abroad, including more than 40 CCF-A papers in venues such as ACM Transactions on Graphics, IEEE TVCG, IEEE CVPR, and AAAI. He holds 15 patents granted in China and the United States. The 3D registration and reconstruction technology he developed has been applied in high-precision scanners and human body 3D reconstruction systems. In 2014, he was funded by the National Science Fund for Distinguished Young Scholars, led a key project of the National Natural Science Foundation of China, and won the second prize of the Zhejiang Provincial Natural Science Award.

Lecturers

Juyong Zhang

University of Science and Technology of China

Biography: Juyong Zhang is a professor at the School of Mathematical Sciences at the University of Science and Technology of China. He has received funding from the National Outstanding Youth Foundation and the Excellent Membership of the Youth Innovation Promotion Association of the Chinese Academy of Sciences. In 2006, he graduated from the Department of Computer Science, University of Science and Technology of China. In 2011, he graduated from Nanyang Technological University, Singapore. From 2011 to 2012, he worked as a postdoctoral researcher at the Swiss Federal Institute of Technology Lausanne. His research field is computer graphics and 3D vision. His recent research interests are efficient and high-fidelity 3D digitization of the real physical world based on neural implicit representation, inverse rendering, and numerical optimization methods, and the creation of highly realistic virtual digital content.

Lecture Title: High-fidelity 3D Digitization based on Neural Implicit Representation

Abstract: Efficient and high-precision three-dimensional reconstruction of people, objects, and scenes in the real physical world is a core research problem in computer graphics, three-dimensional vision, and related fields. Traditional 3D vision and 3D reconstruction pipelines usually include multiple steps such as depth acquisition, point cloud registration, and mesh reconstruction. These cumbersome pipelines and their hardware requirements have kept high-fidelity 3D reconstruction and presentation from becoming as widespread as 2D images. In recent years, neural implicit functions, represented by neural radiance fields (NeRF), have achieved major breakthroughs in novel view synthesis and high-precision three-dimensional reconstruction thanks to their strong representational capacity and differentiability. In this talk, I will introduce the concept of neural implicit representation, its various improvements, and its applications in the reconstruction of digital humans, objects, and large scenes.

Kai Xu

National University of Defense Technology

Biography: Kai Xu is a professor at the National University of Defense Technology, funded by the National Science Fund for Distinguished Young Scholars. He is also a visiting scholar at Princeton University. His research directions include computer graphics, 3D vision, robot perception, and digital twins. He has published more than 80 CCF-A papers, including 29 papers at SIGGRAPH, the top conference on computer graphics. He serves on the editorial boards of top international journals such as ACM Transactions on Graphics. He has served as paper co-chair of international conferences such as GMP 2023 and CAD/Graphics 2017, and as a program committee member of conferences such as SIGGRAPH and Eurographics. He serves as the deputy director of the 3D Vision Committee of the Chinese Society of Image and Graphics, the deputy director of the Geometric Design and Computing Committee of the Chinese Society of Industrial and Applied Mathematics, and the director of the Chinese Graphics Society. He has won 2 first prizes of the Hunan Provincial Natural Science Award (ranked 1 and 3 respectively), the first prize of the Natural Science Award of the China Computer Federation (ranked 3), the second prize of the Army Science and Technology Progress Award, and the second prize of the Army Teaching Achievement Award.

Lecture Title: Embodied Intelligence based on Three-dimensional Geometric Perception

Abstract: Visual perception is the most important way for robots to explore, perceive, and understand unknown environments. With the rapid development of 3D sensing technology, 3D graphics is being deeply integrated with robot vision, forming a new mode of robot perception and interaction based on 3D geometry. This enables robots to perceive and dexterously interact with unknown environments in 3D, and ultimately supports embodied intelligence for robots in 3D environments. This talk covers three aspects (reconstruction, understanding, and interaction) and presents our work in recent years, including robust and scalable real-time 3D reconstruction, autonomous and cooperative robot scene scanning and reconstruction, active robot scene understanding, and dexterous robot grasping based on three-dimensional geometric representation learning. It also explores future directions for embodied intelligence based on three-dimensional geometric perception.

Jie Guo

Nanjing University

Biography: Dr. Jie Guo is an associate researcher in the Department of Computer Science and Technology at Nanjing University. He received his PhD from Nanjing University in 2013. His current research interests are mainly in computer graphics, virtual reality, and 3D vision. He has over 70 publications in internationally leading conferences (SIGGRAPH, SIGGRAPH Asia, CVPR, ICCV, ECCV, IEEE VR, etc.) and journals (ACM ToG, IEEE TVCG, IEEE TIP, etc.). He has developed several applications for illumination prediction, material prediction, and real-time rendering, which have been widely used in industry and have yielded good economic and social benefits. He is the recipient of the JSCS Youth Science and Technology Award, the JSIE Excellent Young Engineer Award, the Huawei Spark Award, the 4D ShoeTech Young Scholar Award, and the Lu Zengyong CAD&CG High-Tech Award.

Lecture Title: Estimating Material Appearance from a Single Image

Abstract: Building a virtual world that is consistent with the real world has always been a goal pursued by researchers in the field of computer graphics. Material estimation techniques are an essential part of this process. In recent years, deep learning has emerged as an important foundational technology that has driven the development of material estimation techniques and accelerated their practical applications. This talk aims to explore the material estimation problem in real-world scenarios and focuses on solving the problem under a lightweight setting that uses a single image as the input.

Visual Information Generation and Credible Identification

Introduction

With the rapid development of artificial intelligence and digital image processing, large amounts of images, videos, and graphic content are being generated and disseminated. At the same time, the authenticity and credibility of this visual content are being seriously challenged. As a result, the generation and credible identification of visual content have become hot topics in computer vision. First, this forum will delve into visual content generation methods based on technologies such as generative AI. These methods can be used not only to synthesize images and videos but can also be extended to 3D models and animations. The next question is how to ensure the authenticity and legality of the generated content and prevent the spread of false information. To that end, the forum will discuss the application of credible identification technology for visual content. Focusing on the credibility of visual content, it will review tampering and counterfeiting technologies, analyze the difficulties and hot issues in visual content counterfeiting, and highlight research on face forgery detection and liveness detection at the data layer, content layer, and semantic layer. The forum will bring together experts from academia to discuss basic technological progress and analysis in both directions, generation and identification, as well as specific application practices. Visual content generation and credible identification is a research field full of challenges and opportunities, and an inevitable trend in the development of content creation and dissemination in the digital era.

Schedule

Sept. 22nd 9:00-12:00

Organizer

Nannan Wang

Xidian University

Biography: Nannan Wang is a professor and doctoral supervisor at Xidian University, and deputy director of the National Key Laboratory of Air, Space and Ground Integrated Business Network. In recent years, he has been engaged in research on image cross-domain reconstruction and credible identification. He has published more than 200 papers in international academic journals such as IEEE TPAMI and IJCV and international academic conferences such as CVPR, ICCV, ECCV, ICML, and NeurIPS, and has been granted 30 national invention patents. He has also completed 7 patent technology transfers and registered 3 software copyrights. His achievements have won the first prize of the Natural Science Award of the Ministry of Education, the first prize of the Shaanxi Provincial Science and Technology Award, and the Outstanding Doctoral Thesis award of the China Artificial Intelligence Society. He has led projects including the National Natural Science Foundation of China Outstanding Youth Fund and its joint fund key, general, and youth projects, a sub-topic of the Science and Technology Innovation 2030 “New Generation Artificial Intelligence” major project, and the Equipment Pre-research and Ministry of Education Joint Fund. He has served as an associate editor-in-chief of the international journal “The Visual Computer”.

Co-organizer

Shengsheng Qian

Institute of Automation, Chinese Academy of Sciences

Biography: Shengsheng Qian is an associate professor at the Institute of Automation, Chinese Academy of Sciences. In recent years, he has been engaged in research on cross-media reasoning and trustworthiness assessment. He has published 46 papers in IEEE TPAMI and other IEEE/ACM Transactions journals and CCF-A conferences, one of which was selected as an ESI Highly Cited Paper. His work has received the 2018 Excellent Doctoral Dissertation award of the Chinese Academy of Sciences, the best paper award of ACM Multimedia in 2016, a best paper nomination at ACM Multimedia in 2019, and the best paper award of the China Multimedia Conference in 2019, among others. He has led National Natural Science Foundation of China youth, general, and key project sub-projects, the Chinese Academy of Sciences Special Research Assistant Funding Project, the Tencent WeChat Rhino-Bird Focused Research Program, and the 166 Project.

Lecturers

Jun Zhu

Tsinghua University

Biography: Jun Zhu is Bosch AI Professor in the Department of Computer Science at Tsinghua University, an IEEE Fellow, Vice President of the Institute of Artificial Intelligence at Tsinghua University, and a former adjunct professor at Carnegie Mellon University. He received his bachelor’s and doctoral degrees from Tsinghua University between 2001 and 2009. His research focuses on machine learning. He has served as an associate editor-in-chief of the internationally renowned journal IEEE TPAMI, and has served as senior area chair and best paper review committee member for ICML, NeurIPS, ICLR, and other conferences more than 20 times. He has won the Qiushi Outstanding Youth Award of the China Association for Science and Technology, the Scientific Exploration Award, the First Prize of the Natural Science Award of the China Computer Federation, the First Prize of the Wu Wenjun Artificial Intelligence Natural Science Award, and the ICLR Outstanding Paper Award, among others, and was selected as a Ten Thousand Talents Program Leading Talent, a CCF Young Scientist, and an MIT TR35 Chinese Pioneer. Many doctoral students he has supervised have won the CCF Outstanding Doctoral Thesis award, the Outstanding Doctoral Thesis award of the China Artificial Intelligence Society, and the Tsinghua University Special Scholarship.

Lecture Title: Diffusion Probabilistic Model and its Application

Abstract: AIGC is developing rapidly. The diffusion probabilistic model is one of the key technologies of AIGC and has enabled significant progress in cross-modal text and image generation, 3D generation, and video generation. This talk will introduce several advances in diffusion probabilistic models, including their basic theory and efficient algorithms, large-scale multi-modal diffusion models, text-to-3D generation, and controllable video generation.

Weihong Deng

Beijing University of Posts and Telecommunications

Biography: Weihong Deng is a professor at the School of Artificial Intelligence, Beijing University of Posts and Telecommunications, and a Young Changjiang Scholar of the Ministry of Education. His research interests include biometric identification, affective computing, and multimodal learning. In recent years, he has presided over more than 20 projects of the National Key R&D Plan and the National Natural Science Foundation of China. He has published more than 100 papers in international journals and conferences such as IEEE TPAMI, IJCV, TIP, ICCV, CVPR, and ECCV, and his work has been cited more than 13,000 times according to Google Scholar. He has served many times as an area chair of CVPR, ECCV, ACM MM, AAAI, IJCAI, and other conferences. His honors include the Beijing Outstanding Doctoral Thesis award, Beijing Science and Technology Star, the Ministry of Education’s New Century Outstanding Talents, and Elsevier China Highly Cited Scholar.

Lecture Title: Fake Visual Content Detection

Abstract: As the most direct way to record objective facts, visual data is irreplaceable in daily life, but it also raises problems that cannot be ignored. In the era of booming online media, with breakthroughs in generative technologies such as generative adversarial networks and diffusion models, concerns about the authenticity and safety of visual content have become increasingly prominent, attracting great attention from the state to the general public. In this tutorial, focusing on the credibility of visual content, we briefly review generation, tampering, and counterfeiting technologies, analyze the difficulties and hot issues in visual content counterfeiting, and highlight our research team’s work on face forgery and liveness detection at the data layer, content layer, and semantic layer.

Baoyuan Wu

Chinese University of Hong Kong, Shenzhen

Biography: Dr. Baoyuan Wu is a Tenured Associate Professor at the School of Data Science, the Chinese University of Hong Kong, Shenzhen (CUHK-Shenzhen). His research interests are AI security and privacy, machine learning, computer vision, and optimization. He has published 70+ top-tier conference and journal papers, in venues including TPAMI, IJCV, NeurIPS, ICML, CVPR, ICCV, ECCV, ICLR, and AAAI, and one paper was selected as a Best Paper Finalist at CVPR 2019. He is currently serving as an Associate Editor of Neurocomputing, Organizing Chair of PRCV 2022, and Area Chair of CVPR 2024, NeurIPS 2022/2023, NeurIPS Datasets and Benchmarks Track 2023, ICLR 2022/2023/2024, ICML 2023, AAAI 2022/2024, AISTATS 2024, WACV 2024, and ICIG 2021/2023. He was named in the world’s top 2% scientists lists for 2021 and 2022, released by Stanford University.

Lecture Title: Visual AIGC and Its Detection

Abstract: In this tutorial, I will first introduce the basics of visual AIGC, including its history, applications, and two mainstream technologies, i.e., GANs and diffusion models. Then, a complete taxonomy will be presented to summarize the main branches of visual AIGC, covering facial and natural images, as well as their applications, with a focus on face forgery. Finally, I will introduce the detection of two types of generated visual content (image or video), namely face forgery and AI-generated natural images, covering the main challenges, mainstream detection methods, and future trends.